Here are the slides for an invited talk I gave at my alma mater, U.C. Santa Barbara’s Center for Spatial Studies (spatial@ucsb) on October 27th — virtually of course. I have annotated them with speaker notes, outlined with dashed red lines.



linkedplaces.draw (part 2)

In Part 1 of this posting, I introduce the linkedplaces.draw (LPDraw) tool and briefly explain its motivation. This is a pilot software project, and has been put together fairly quickly to support historical mapathons we are undertaking at the University of Pittsburgh’s World History Center (WHC). These will produce historical place data that can be contributed to the World Historical Gazetteer platform. Figure 1 illustrates most functionality of the tool itself. Figure 2 below shows some screens in the “Dashboard” section of the app.

We are testing this alpha draft version now, and it will undoubtedly undergo changes and additions in the weeks to come. In time, I plan to package the tool so that anyone handy with Django and PostgreSQL can stand up their own instance. But all this is simply a demonstrator for a “proper” crowd-sourcing app, which will require a) design, b) funding and c) developer(s) to realize. Please get in touch with ideas about that.

One piece of a puzzle

The WHC workflow that the LPDraw tool will play a part in goes like this:

- Identify one or several maps, data from which will be useful for some research domain—typically of a particular region or region/period combination. These could be historical maps downloadable from an online resource like David Rumsey Map Collection, or via Old Maps Online. Or they could be paper maps, possibly from a print historical atlas.

- Georeference each map image, resulting in a GeoTIFF file for each.

- We use QGIS for this; there are other options

- Create an xyz map tileset for each GeoTIFF. We are using MapTiler software for this. Note tileset details like the minimum and maximum zoom and bounds.

- Upload the tileset(s) to a web-accessible location

- Register as a user in the LPDraw app

- Create a project record and map records for each individual map in the project

- Identify, for the project as a whole or for each map, the feature types to be digitized, and a timespan representing temporal coverage of the project and/or each individual map.

- Feature types will be presented as options in the interface, and timespans will be automatically added to each digitized feature (if that option is checked in the map record)

- Feature types are not by default restricted to a particular geometry; points, lines, and polygons are all options

- Assign other registered users as collaborators able to create and edit features for the project.

- In the “draw” screen, choose a project and map from the dropdown menus. Once the map loads, digitize features as desired.

- Use the opacity control to view the underlying map, as an assist to proper placement

- Enter a name or names in the popup, according to the LP-TSV format convention, e.g. separating variants by a ‘;’

- Download options available so far are Linked Places format (GeoJSON-compatible) and TSV.
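To make the last two steps more concrete, here is a minimal Python sketch of how a popup entry with ‘;’-separated name variants might be turned into a GeoJSON-compatible feature carrying a timespan. It is not LPDraw's actual code: the function names, the `feature_type` property, and the sample place and years are assumptions for illustration, and the `names` and `when` keys only loosely follow Linked Places format conventions.

```python
import json


def split_names(raw):
    """Split a popup entry such as 'A; B; C' into a title and its variants."""
    parts = [p.strip() for p in raw.split(";") if p.strip()]
    if not parts:
        raise ValueError("at least one name is required")
    return parts[0], parts[1:]


def make_feature(raw_names, geometry, start_year, end_year, feature_type):
    """Build a GeoJSON-compatible feature with names and one timespan.

    The key names here are assumptions, loosely modeled on Linked Places format.
    """
    title, variants = split_names(raw_names)
    return {
        "type": "Feature",
        "properties": {"title": title, "feature_type": feature_type},
        "names": [{"toponym": name} for name in [title] + variants],
        "when": {
            "timespans": [
                {"start": {"in": str(start_year)}, "end": {"in": str(end_year)}}
            ]
        },
        "geometry": geometry,
    }


# Illustrative values only: an approximate point and a made-up timespan.
feature = make_feature(
    "Samarkand; Samarqand ;Maracanda",
    {"type": "Point", "coordinates": [66.97, 39.65]},
    1400,
    1500,
    "settlement",
)
print(json.dumps(feature, indent=2))
```

Because the timespan is attached when the feature is built, every digitized feature inherits the temporal coverage of its map unless an editor overrides it later.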

linkedplaces.draw (part 1)

In Part 2, I describe the functionality in linkedplaces.draw to date. At some point, a collaboratively authored functional spec for a ‘proper’ crowd-sourcing tool will come together on GitHub.

I have been building a pilot tool for digitizing features from georeferenced historical maps (and maps of history such as found in historical atlases), tentatively named linkedplaces.draw. Its immediate intended use is for what we at the University of Pittsburgh World History Center have been calling “historical mapathons.” We are planning to facilitate, stage and encourage such events, in which individuals and small groups can virtually gather to harvest temporally scoped features from old maps, for their immediate use and for preparing contributions to World Historical Gazetteer (WHG) [1]. We have begun testing it by digitizing features for settlements, archaeological sites, regions, dynasties, and ethnic groups from the highly regarded “An Historical Atlas of Central Asia” (Bregel 2005).

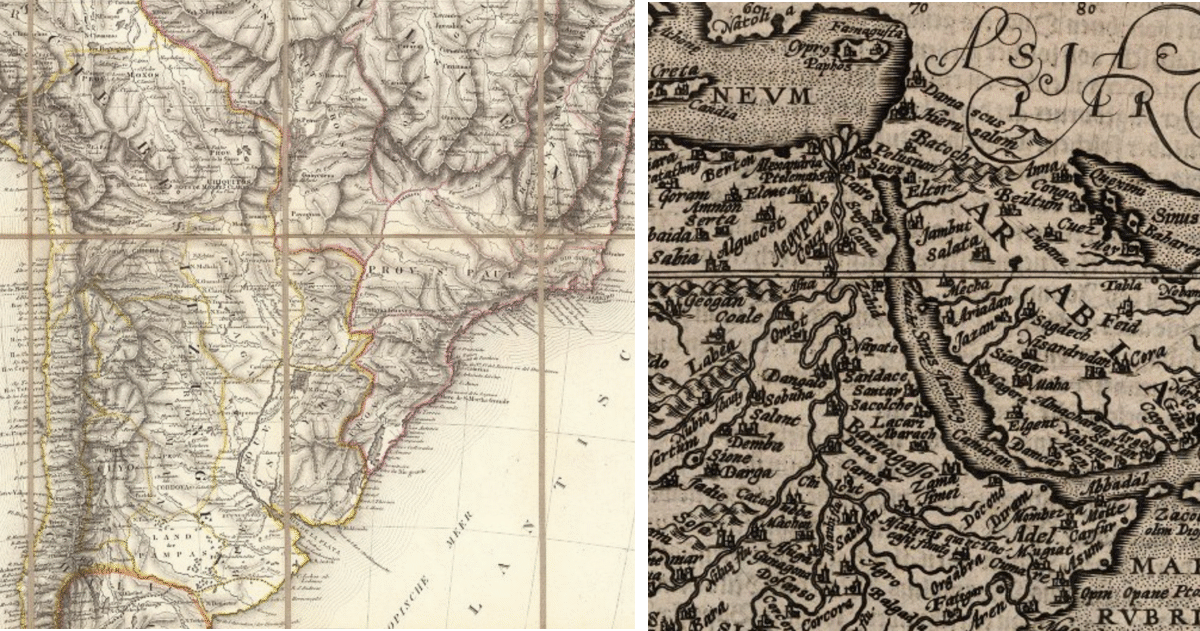

Maps v. Texts as Gazetteer Sources

Old maps represent a largely untapped storehouse of information about geographies of the past. Digitizing features from maps that can be geo-referenced (a.k.a. geo-rectified, or warped) without too much distortion provides an estimated geometry that is invaluable. The most immediate use scenario driving development of WHG is mapping place references in historical texts and tabular datasets. But to make a digital map you need geometry, however approximate. Discovering suitable coordinates for lists of place names drawn from historical texts is far and away the most difficult and time-consuming task in this scenario. If your source texts are of a region and period it makes sense to build a gazetteer of that region and period using maps made at the time–and then to contribute the data to WHG so no one has to ever do it again! Certainly, the feasibility of deriving useful geometry in this way is reduced the further back in history one goes.

Maps made prior to the 18th century–beautiful, instructive, and useful as they may be–normally don’t have sufficient geodetic accuracy for this purpose (Fig. 2b). Obtaining geometry for place references made in earlier periods will require a different approach, e.g. capturing topological relations like containment. One can also digitize features from maps of history, as we are doing with the Bregel atlas mentioned above.

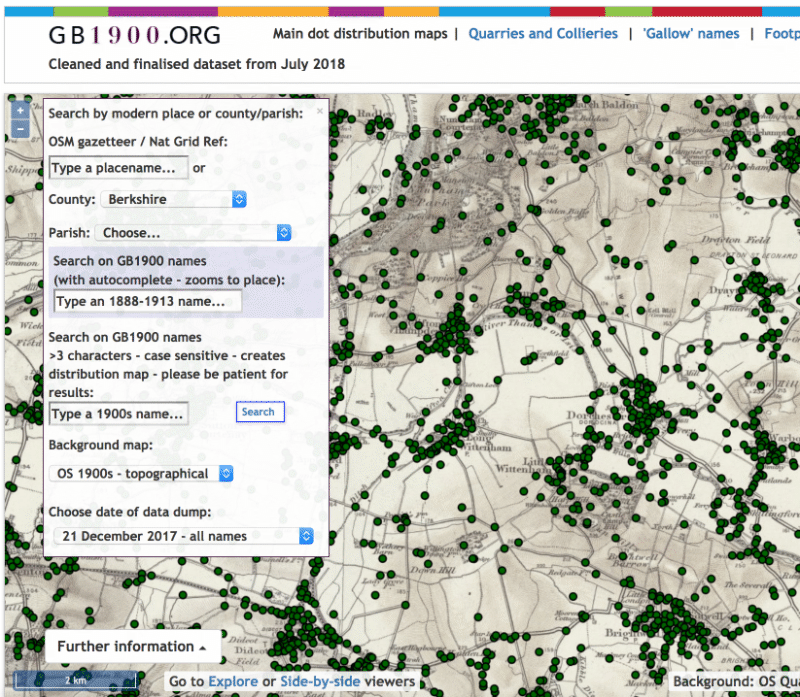

The GB1900 Proof-of-Concept

Although hundreds of thousands of old maps have been scanned and made available for viewing and download by map libraries around the world [2], the names and estimated coordinates of features on them have not been transcribed in any quantity. The recent project GB1900 provided a successful proof-of-concept (cf. “The GB1900 project–from the horse’s mouth”). Crowd-sourcing map transcription software was custom-built for the public at large to work with a single Ordnance Survey map, and over a period of months millions of names and geometries were digitized. Preliminary results can be viewed at http://geo.nls.uk/maps/gb1900/; analytical products are sure to follow.

Unfortunately, although the code for the GB1900 crowd-sourcing software is available, it is not re-usable; at least I and others have been unable to revive it. Hence, linkedplaces.draw, which will hopefully serve as a demonstrator that could be used in finding funds to build a sturdy open-source platform that can be used by groups of any size–including “the crowd”–to do this valuable work.

Related Tasks and Software

Several existing free software packages and web sites provide users the capability to perform these tasks related to old map feature digitization, in some combination: a) georeference a map image and save the rectified result as a GeoTIFF, b) create web map tilesets from a GeoTIFF file, and c) display single images or tilesets as overlays on modern web base maps. Typically viewers for these provide an opacity control, allowing comparison of old and modern geography. This is all great, but what is missing is the capability to draw or trace features from the rectified images.
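For readers unfamiliar with how web map tilesets are addressed, the following Python sketch shows the standard Web Mercator "XYZ" calculation that maps a longitude/latitude pair to a tile column and row at a given zoom. It assumes the XYZ scheme (row 0 at the top); some tools can also write TMS tilesets, in which the row axis is flipped. The URL template is hypothetical.

```python
import math


def lonlat_to_tile(lon, lat, zoom):
    """Return the (x, y) tile containing a point, in the XYZ / Web Mercator scheme."""
    n = 2 ** zoom  # number of tiles along each axis at this zoom
    x = int((lon + 180.0) / 360.0 * n)
    lat_rad = math.radians(lat)
    y = int((1.0 - math.asinh(math.tan(lat_rad)) / math.pi) / 2.0 * n)
    # Clamp so points on the far edges still fall inside the grid.
    x = min(max(x, 0), n - 1)
    y = min(max(y, 0), n - 1)
    return x, y


def tile_url(template, lon, lat, zoom):
    """Fill a {z}/{x}/{y} URL template for the tile containing the point."""
    x, y = lonlat_to_tile(lon, lat, zoom)
    return template.format(z=zoom, x=x, y=y)


# Hypothetical tileset location, for illustration only.
print(tile_url("https://example.org/tiles/{z}/{x}/{y}.png", 66.97, 39.65, 8))
```

A viewer overlays such tiles on a modern base map by requesting the same z/x/y addresses from both sources, which is why the minimum and maximum zoom and the bounds of a tileset matter.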

Coda (for the moment)

For years, computer scientists and others have explored the possibility of automated feature extraction. There are a few such efforts under way right now. I wish them godspeed, and do believe machine methods will ultimately be able to extract a list of names from some relatively recent map series having especially clear cartography, but also that they will never handle maps like Figure 2a, and will never successfully extract the estimated geometry of even point features. Yes I know, never say never. In the meantime…

[1] The World Historical Gazetteer project is building a web platform for developing, publishing, and aggregating data about places drawn from historical sources by members of the broad community of interest studying the past within and across numerous disciplines. A Version 1 launch is planned for June/July 2020. See the About pages and Tutorials at http://dev.whgazetteer.org for details.

[2] The extraordinary David Rumsey Map Collection has many extended features and direct hi-res downloads; Old Maps Online is a “gateway to historical maps in libraries around the world.”

User Stories for the World-Historical Gazetteer

My work designing and developing the World-Historical Gazetteer (WHGaz [1]) is under way. This NEH‑funded 3‑year project is based at the University of Pittsburgh World History Center and directed by Professor Ruth Mostern. David Ruvolo is Project Manager, and Ryan Horne will contribute in his new post-doc role at the Center. I’m very pleased to serve as Technical Director, working from Denver.

The project actually comprises more than a gazetteer. An official description of the project’s goals and components is forthcoming; in the meantime, its deliverables include:

A gazetteer, defined in the proposal as “a broad-but-shallow work of common reference consisting of some tens of thousands of place names referring to places that have existed throughout the world during the last five hundred years.”

Interfaces to the gazetteer, including

- a public API;

- a public web site providing graphical means for data discovery, download, and visualization, and serving as a communication venue for the community of interest;

- a web-based administrative interface for adding and editing data

An “ecosystem”, described as “a growing and open ended collection of affiliated spatially aware world historical projects,” seeded by two pilot studies concerning the Atlantic World and the Asian Maritime World

Models, formats, vocabularies. The conceptual and logical data models, data formats (e.g. GeoJSON-T), and controlled vocabularies (e.g. place types) developed for the project will be aligned with solid existing resources and published alongside data

Documentation. Software developed for the project will be maintained in a public GitHub repository. Additional documentation will be produced in the form of research reports published on the website and scholarly articles appearing in relevant journals.

What, for whom, and why

One of our first steps is developing “user stories” for the project, an element of the Agile development method that is a simple and effective way of capturing high-level requirements from users’ perspectives. I polled developers of some of our cognate projects (Pelagios, PeriodO, Pleiades) and added ideas stemming from their experiences to my own in creating the following preliminary list. If you can think of others that aren’t accounted for, please add them in a comment or email me. In my own streamlined version of Agile (Agile-lite?), user stories lead more or less directly to schematic representations of features supporting functions, then to coding. Evidence of streamlining is found in the detail already in place under items 18 and 19 (thanks, Ryan Shaw).

The next appearance of the features suggested by these stories will be in ordered lists of GitHub “issues” – coming soon.

Users

user: any one of the following

researcher: academic or journalistic

editor: of WHGaz data

developer: anyone building software interfaces to WHGaz services

hobbyist: amateur historians, genealogists, general public

teacher: at any level

User stories

- As a {user}, I want to {view results of searches for place names in a map+time-visualization application} in order to {discover WHGaz contents}

- As a {user}, I want to {discover resources related to a search result} in order to {learn more about the place and available scholarship about it}

- As a {user}, I want to {learn about the WHGaz project: its motivations, participants, methods, work products, timeline} in order to {determine its quality and relevance to my purposes; see where my tax dollars are going}

- As a {user}, I want to {suggest additions to the WHGaz} in order to {make the resource more complete/useful}

- As a {researcher} I want to {publish my specialist gazetteer data for ingest by centralized index(es)} in order to {make my data discoverable by place and optionally, by period}

- As a {researcher} I want to {search a geographic area (i.e. space rather than place)} in order to {find sources relating to places in this area}

- As a {researcher} I want to {find historical source documents, incl. by keyword search} in order to {identify which places they refer to}

- As a {researcher} I want to {compare historical sources} in order to {see how they might be related to one another through common references to place}

- As a {researcher} I want to {compare the geographical relationships (and names) represented in ancient texts with historical and modern representations}

- As a {researcher/developer} I want {different options for re-using data (from data downloads to APIs and embeddable widgets)} in order to {enrich my own work/online publication}

- As a {researcher/developer} I want to {locate individual or multiple authority record identifiers for toponyms tagged in source material} in order to {find related research data}

- As a {researcher/developer}, I want to {retrieve WHGaz data in any quantity (filtered set, complete dump) according to multiple search parameters, using web form(s) or a RESTful query} in order to {re-use the data for any purposes, according to WHGaz license terms}

- As a {researcher/developer}, I want to {learn how to construct API queries} in order to {incorporate WHGaz data in my analyses/software}

- As a {researcher/hobbyist} I want to {embed a WHGaz map in a wordpress blog}

- As a {researcher/hobbyist} I want to {display places and movements (!) presented in specific texts} in order to {understand the spatial-temporal context of a text}

- As a {teacher} I want {quick lookup tools linked to authoritative information} in order to {use the data in teaching}

- As an {editor}, I want to {add and edit place records} in order to {make the WHGaz resource more complete/accurate/useful}

- As a {developer} I want to {query WHGaz programmatically, returning GeoJSON/GeoJSON-T features in JSON lines format, each having 1) a “properties” object including (a) an identifier, (b) one preferred label and one or more alternate labels (w/optional language tags), (c) name and URLs of the gazetteers to which it belongs; 2) a geometry object; and 3) a “when” object describing temporal extent} in order to {use external gazetteer data in my (PeriodO) client interface}

- Allow querying by:

- providing text to be matched against feature labels

- specifying a rectangular bounding box (option to include all intersecting features or only those contained within it)


- As a {developer} I want to {query WHGaz as above via a GUI, with option to filter results by gazetteer} in order to {browse and/or download records}

- Entering text into the text input should display a list of matching feature labels, in sections titled by gazetteer name

- Hovering over a result in the list should display/highlight the feature on the map; zoom to feature (?)

- Selecting a particular result from the list should raise popup with info about it

- The map display needs to support custom tile sets including the Ancient World Mapping Center’s.
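The programmatic query story above (features in JSON Lines, filtered by label text or by bounding box) could be sketched roughly as follows. This is only an illustration under stated assumptions, not a WHGaz API: the property names `preferred_label` and `alternate_labels`, the function names, and the sample records are all hypothetical. The bounding-box test compares each feature's envelope with the query box, so "intersecting" is an approximation for lines and polygons.

```python
import json


def feature_bbox(geometry):
    """Return (min_lon, min_lat, max_lon, max_lat) for a coordinate-based geometry."""
    def walk(coords):
        if isinstance(coords[0], (int, float)):
            yield coords[0], coords[1]
        else:
            for child in coords:
                yield from walk(child)

    points = list(walk(geometry["coordinates"]))
    lons = [p[0] for p in points]
    lats = [p[1] for p in points]
    return min(lons), min(lats), max(lons), max(lats)


def labels(feature):
    """Collect the preferred and alternate labels (assumed property names)."""
    props = feature["properties"]
    return [props["preferred_label"]] + props.get("alternate_labels", [])


def matches(feature, text=None, bbox=None, contained_only=False):
    """Apply the optional label and bounding-box filters to one feature."""
    if text is not None:
        needle = text.lower()
        if not any(needle in label.lower() for label in labels(feature)):
            return False
    if bbox is not None:
        fx0, fy0, fx1, fy1 = feature_bbox(feature["geometry"])
        qx0, qy0, qx1, qy1 = bbox
        if contained_only:
            if not (qx0 <= fx0 and qy0 <= fy0 and fx1 <= qx1 and fy1 <= qy1):
                return False
        elif fx1 < qx0 or fx0 > qx1 or fy1 < qy0 or fy0 > qy1:
            return False
    return True


def query(lines, **filters):
    """Yield features from an iterable of JSON Lines that pass the filters."""
    for line in lines:
        line = line.strip()
        if line:
            feature = json.loads(line)
            if matches(feature, **filters):
                yield feature


# Two made-up records, one JSON object per line.
SAMPLE = "\n".join([
    json.dumps({
        "type": "Feature",
        "properties": {"id": "ex:1", "preferred_label": "Alpha",
                       "alternate_labels": ["Alfa"]},
        "geometry": {"type": "Point", "coordinates": [10.0, 20.0]},
        "when": {"timespans": [{"start": {"in": "1500"}, "end": {"in": "1600"}}]},
    }),
    json.dumps({
        "type": "Feature",
        "properties": {"id": "ex:2", "preferred_label": "Beta"},
        "geometry": {"type": "LineString", "coordinates": [[0.0, 0.0], [30.0, 30.0]]},
        "when": {"timespans": [{"start": {"in": "1700"}, "end": {"in": "1750"}}]},
    }),
])

for hit in query(SAMPLE.splitlines(), bbox=(5, 5, 15, 25), contained_only=True):
    print(hit["properties"]["id"])
```

Streaming one feature per line keeps memory use flat for large result sets, which suits the "any quantity" downloads mentioned in the stories above.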

[1] WHGaz is an unofficial short form used in this post; official naming will undoubtedly ensue